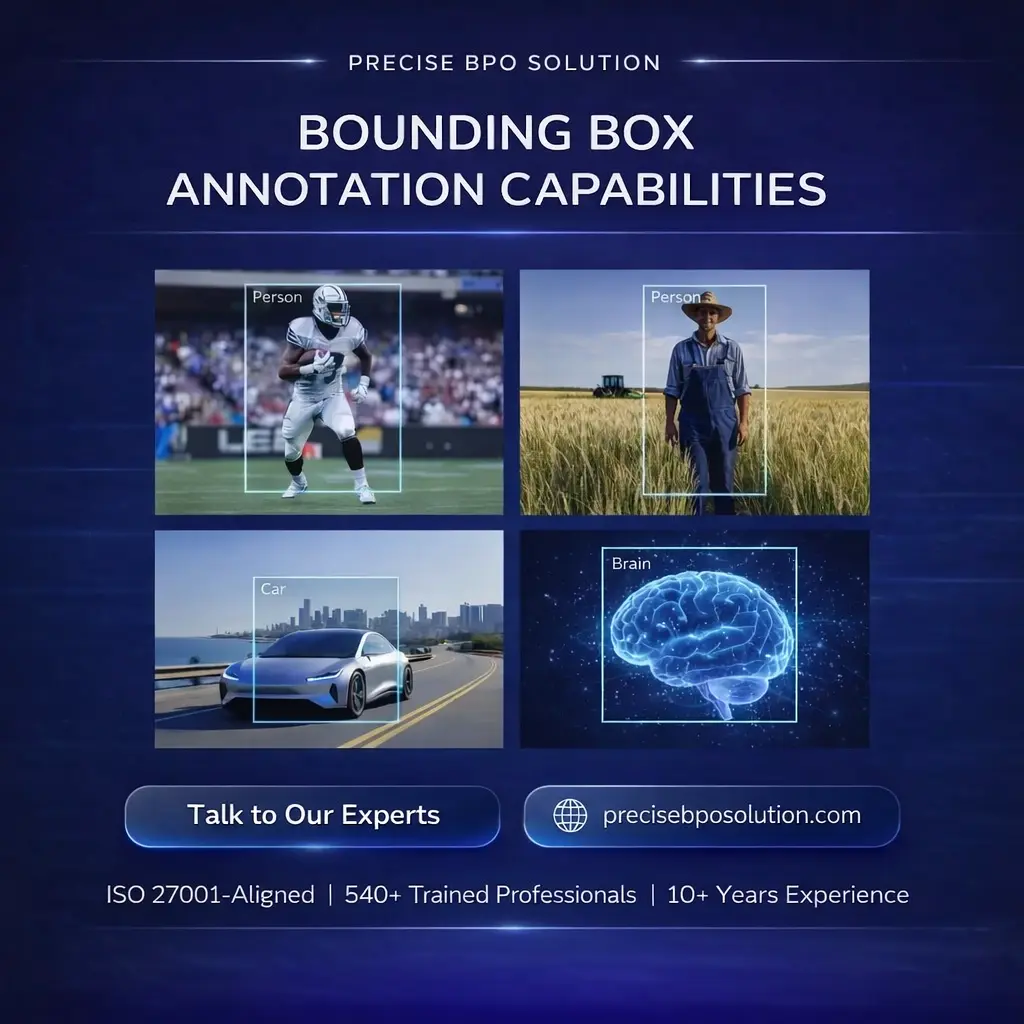

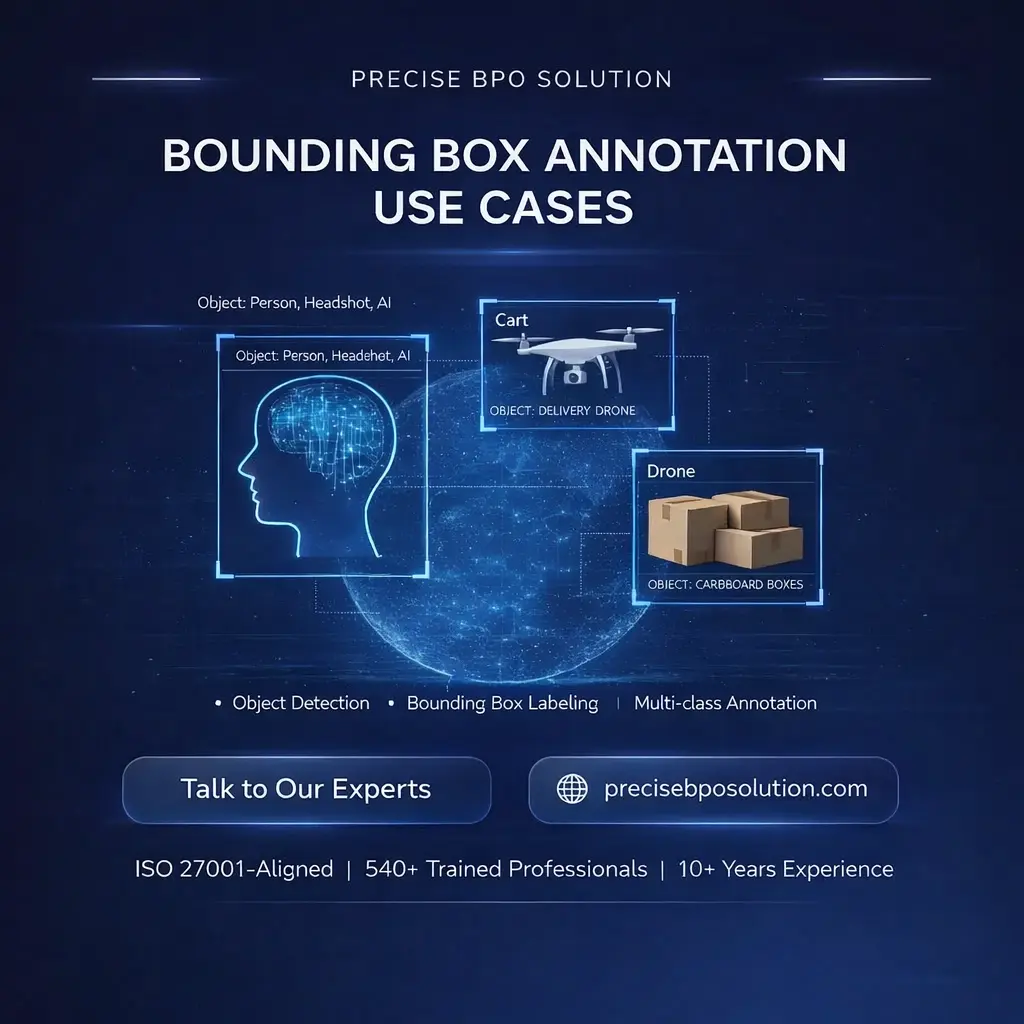

Bounding box annotation provides precise object-level labels for detection, tracking, and localization tasks across images and videos. Each carefully drawn box marks an object's boundaries and class, giving computer vision models the ground truth they need for accurate detection and multi-class classification. High-quality bounding boxes help reduce false positives, improve recall, and speed up enterprise AI deployment.

Precise BPO India combines 10+ years of experience with 540+ expert annotators to deliver large-scale object detection labeling, datasets, and AI labeling services for AI teams worldwide. Our workflows cover SBU, MBU, and enterprise projects, including class hierarchy setup, annotation guidelines, box placement rules, IoU (intersection over union) standards, and frame-level quality checks that keep labels consistent across object types and environments.

We have processed 810M+ images across projects, including 390M+ object-level labeling tasks in the automotive, healthcare, retail, agriculture, geospatial, sports, robotics, and industrial AI domains. This volume of work supports training for advanced detection models, multi-stage AI pipelines, and versioned dataset workflows, all delivered with high accuracy and strict data security.

ISO-aligned controls, automated audits, IoU scoring, reviewer checks, and sampling keep object detection annotation precise at high volume. Serving clients across the US, UK, EU, Middle East, APAC, and LATAM, Precise BPO India delivers scalable, cost-efficient object detection datasets and AI labeling services for global enterprise AI projects.